An effort to clarify the role of deliberative evaluation in the planning and policy-making process. Thorbjoern Mann, May 2020.

INSIGHTS / IMPROVEMENT SUGGESTIONS: CONCLUSIONS?

The two dozen blog posts of the past few months have tried to explore the many facets of deliberative evaluation as it relates to the planning discourse. Necessarily, the issue-by-issue treatment does not do justice to all the connections and relationships between them. Many questions calling for more exploration, testing and research were raised but, of course, not resolved. Faced with public planning tasks today, we always have to make decisions based ‘on the best of our current incomplete knowledge’, so it seems appropriate to try to summarize that current state of knowledge. What can be learned from this exploration? The following notes highlight a few insights for discussion.

No ‘universal’ common approach

The first answer to this question may sound disappointing: there are so many different attitudes, perspectives, situations and tasks involved in planning that any suggestion of a ‘standard’ common approach or procedure would, in one way or another, be inadequate to the specific conditions of each case. Therefore, it would be pointless to try to make general recommendations about the details of specific approaches. They would be of the kind ‘if approach or technique X is used, it should be done with specific details x1, x2, etc.’ Such recommendations belong in the specifications for individual techniques in the tool kit. The only meaningful ‘general’ rule should be to coordinate those agreements with the tools used in other phases of a project, for the sake of consistency and to avoid confusion caused by too many different jargon terms and rules.

Critical issues

In the course of the discussion, the initial set of issues had to be revised. Some questions emerged as more controversial and harder to reconcile than others. They involve what seem to be fundamental theoretical objections to systematic ‘methods’ of evaluation, or simply efforts to sidestep the question because evaluation is seen as an unnecessary, cumbersome addition to a planning project. The first of these positions rests on the confidence that a valid theory applied to the process of generating planning or policy proposals will make evaluative scrutiny unnecessary; the second rests on belief in concepts such as the ‘wisdom of crowds’, or in the superior intuition of policy developers, of participants in the discourse, or of ‘leaders’ making the decision.

A related question arises from the practice of a number of ‘management consulting’ approaches that rely on facilitator-guided small-group events aiming at consensus or consent decisions, or at recommendations for single solutions generated by a theory (such as the Pattern Language) or by orchestrated discussion. When an organization contracts with an outside consulting firm, such groups usually consist of selected company employees with special skills or detailed knowledge of the problems to be remedied. The ‘decisions’ reached then become recommendations to the organization’s management. If such approaches are suggested for larger public projects, they would take the form of ‘expert’ panels that are informed of the public’s concerns through surveys or interviews but reach their recommendations in small-group discussions, usually without any formal systematic evaluation procedure. To allow for a greater degree of public participation, it would become necessary to construct a hierarchical structure of small face-to-face group ‘circles’ (to adopt the vocabulary of one such approach). Each higher level of circles consists of representatives of the lower circles, using the same approach or facilitation mode to process the results of the lower circles into recommendations for the next higher level. This problem is one of several strong arguments for an ‘asynchronous’, online but ‘flatter’ organization of the discourse, which is precisely the aim of the overall ‘public planning discourse support platform’, for which new forms of discourse orchestration and decision-making are needed.

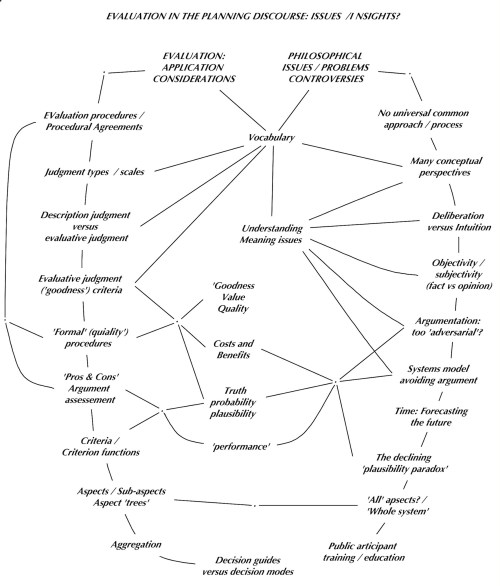

In light of these insights, the diagram mapping the critical issues had to be revised. It shows the elements of evaluation on one side and, on the other, the issues arising from different views about the role of evaluation in the planning process.

Figure 1 — Issues and Controversies, Revised

Embedding a ‘toolbox’ of specific techniques in an overall framework

The technological support systems needed for a general planning discourse platform or forum with wide ‘asynchronous’ public participation in larger projects will have to adopt some common assumptions, agreements, and vocabulary. Some such agreements are of course needed for any project, small or large, whether based on F2F interactions or not. Any platform will imply some such agreements, and this poses a significant challenge to its design: to keep a delicate balance between those necessary agreements and the need to accommodate different views even about such initial provisions. One key lesson from the exploration is that such agreements may rest on a wide variety of perspectives. The platform should not impose any one of these perspectives but must remain flexible and open to the variety of views and preferences participants may bring to the table. It should treat reaching common agreements for each project as an integral project task, based on decisions by that project’s participants.

The overall framework must therefore be as simple and inviting to potential participants as possible. As people become more familiar with the platform, it can then offer guidance and opportunities for selecting special techniques and methods from a ‘tool kit’ collection of techniques and tools that facilitate in-depth analysis and evaluation of the particular issues arising in different projects, as participants see the need. The choices should include the option of reaching recommendations and decisions without any explicit systematic deliberation. This, of course, raises questions about what would make decisions legitimate and compelling for the affected populations, and about what responsibility or ‘accountability’ provisions would apply to the respective decision-makers. The idea of using the ‘currency’ of ‘discourse merit points’ to require decision-makers to pay for decisions begins to address this issue.

Figure 2 — A ‘basic’ (‘neutral’) planning process with evaluation as an optional ‘toolbox’ element

Procedural agreements and process

The need for flexibility can be accommodated in the provisions for the procedures to be followed to reach a decision. The diagram below shows one example of a basic framework, drawn from the tradition of parliamentary procedure that will be familiar to most people in countries with parliamentary-type governance. The key feature is the ‘Next step?’ motion, which can be raised at appropriate times during the discourse to call for a decision or other procedural steps, but also to call for the use of a ‘special technique’ for more thorough analysis.

Translation services: language to language, and disciplinary jargon to conversational language

Many problems facing humanity today are ‘international’ or even ‘global’, with affected parties living in areas governed by many different government entities and speaking different languages. Thus, a general platform for the treatment of such projects must, as a matter of course, provide adequate translation services between different natural languages. But since the discourse will draw on scientific and professional knowledge from many disciplines (and consulting firms), it will also need ‘translation’ from the ‘discipline jargon’ of the contributing experts into conversational language.

The argument against ‘argumentation’ as unnecessarily ‘argumentative’ and adversarial

The investigation was largely motivated by the initial question of how to evaluate the merit of arguments in what Rittel called the ‘Argumentative Model of Planning’. The objections against the very word ‘argument’ can of course be dismissed as misunderstanding the meaning of the term: it is not a ‘fighting word’ implying a basically adversarial attitude but an offer of reasoning, a reason showing how a position for or against a proposal ‘follows from’ or can be supported by premises that the audience already accepts or will come to accept upon being shown further evidence.

The existence of such misunderstanding must be acknowledged as a potentially significant and destructive factor in the planning process. I have suggested clarifying the distinction with a different label, such as ‘quarrgument’, for the kind of exchanges that lead to adversarial-only verbal or physical ‘quarrels’. But a better option is perhaps to avoid the term entirely, with a provision to immediately replace an argument (if one is entered into a discourse) with the questions about its premises. Instead of the ‘argument’ version of an entry like:

“Plan A will cause effect B, given conditions C,

B is desirable, and

C will be present”

(which may be stored for reference in the ‘Verbatim’ record of the platform), the displays for the assessment will show the questions:

– Will A cause B, given conditions C?

– Should effect B be aimed for? and

– Will conditions C be present?

The aggregation into argument plausibility, argument weight, and plan plausibility follows the same steps as those shown in the section on planning argument evaluation, but skips the display of argument plausibility and weight, to hide the controversial term.
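The aggregation steps referred to above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the procedure of the earlier section: it assumes each question is answered on a plausibility scale from -1 through 0 (‘don’t know, can’t decide’) to +1, that argument plausibility is simply the product of the three premise plausibilities, that each deontic claim carries a weight (all weights summing to 1), and that plan plausibility is the sum of the weighted argument plausibilities. All class and function names are hypothetical, and other aggregation rules (for instance, the minimum of the premise plausibilities) are equally possible.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PremiseQuestions:
    """One pro/con entry, displayed as its three premise questions (hypothetical structure).

    Judgments use an assumed scale: -1 (certainly not), 0 ("don't know"), +1 (certainly so).
    """
    causes_b_given_c: float   # "Will A cause B, given conditions C?"
    b_should_be_aimed: float  # "Should effect B be aimed for?"
    c_will_be_present: float  # "Will conditions C be present?"
    weight: float             # assumed weight of the deontic claim; all weights sum to 1


def argument_plausibility(q: PremiseQuestions) -> float:
    # Assumed multiplicative rule: one weak premise weakens the whole entry.
    return q.causes_b_given_c * q.b_should_be_aimed * q.c_will_be_present


def argument_weight(q: PremiseQuestions) -> float:
    # Contribution of this entry to the plan judgment.
    return argument_plausibility(q) * q.weight


def plan_plausibility(entries: List[PremiseQuestions]) -> float:
    total = sum(q.weight for q in entries)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("weights of the deontic claims must sum to 1")
    # Intermediate argument values are computed here but need not be displayed.
    return sum(argument_weight(q) for q in entries)


# Invented example judgments of one participant, for illustration only.
entries = [
    PremiseQuestions(0.8, 0.9, 0.7, weight=0.6),   # desirable effect B ('pro' entry)
    PremiseQuestions(0.6, -0.5, 0.9, weight=0.4),  # undesirable side effect ('con' entry)
]
print(round(plan_plausibility(entries), 4))
```

Only the answers to the three questions and the resulting plan plausibility would need to be shown; the intermediate values stay internal, in line with the suggestion to hide the term ‘argument’. Note that with a plain product, two negative premise judgments yield a positive result, which is one reason to treat this multiplicative rule as illustrative only.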

The ‘subjective judgment versus objective fact and measurement’ controversy

The discussion of evaluation here cannot offer a ‘resolution’ of the controversy over whether design and planning decisions should be based on subjective (intuitive) judgments or on objective (‘rational’), measurement-based ‘facts’, nor of how to distinguish between these kinds of judgments. The recommendation, for the time being and for the sake of an effective process in given practical situations, is to leave the controversy aside. Instead, whenever a decision-maker is asked to make, or claims to make, decisions ‘on behalf’ of other affected parties, the parties should call for mutual explanation of their respective bases of judgment: explanation to the satisfaction of the other party, not to some theoretical standard or expert opinion. This may shift the issue to the realms of general research, education or public information. The issue may need research and clarification in those domains, but it cannot be settled separately within planning.

Claims of validity of planning and decision-making methods

The investigation of evaluation in the planning process was motivated by a sense that the planning decision-making process is in need of improvement (especially with respect to evaluation) and that some improvement is possible. This should not be taken as a claim to offer a ‘more perfect’ approach. Rather, the insights from the review suggest that such claims would be pretentious and inadvisable. As just one example, consider the expectation that a planning decision should be based on ‘due consideration’ (and thorough evaluation) of ‘all the pros and cons’ of a planning proposal, as a leader may solemnly promise. It may seem plausible at first sight, but the review showed that it is difficult if not impossible to be certain that ‘all’ those arguments, all potential evaluation aspects, have been or even can be identified. From a systems modeling perspective, the question of the proper boundary of the system at hand (and of its acceptance) is a matter of the system modeler’s judgment more than of the system’s ‘true’ properties. The pressure to justify model assumptions with data leads to a preoccupation with past data and measurable variables, at the expense of unknown future possibilities, new research knowledge and subjective motivations.

Argumentation as practiced in ‘parliamentary’ discourse predominantly deals with ‘qualitative’ effects: an argument that ‘plan x will achieve a precise quantitative outcome y of variable v in a specific time frame t’ is not nearly as plausible as the general but vague qualitative version that ‘plan x will, in time, improve things with respect to v’. And the qualification of planning arguments ‘given circumstances or conditions C’, if taken seriously, will call for an interminable systems analysis of the argument’s complex context. Realizing such interminable complexity will quickly nudge participants to end further scrutiny of these questions: a response that is understandable and perhaps even defensible, but one that does not justify claims of a ‘perfect’ method. But should such questions arise (and arguably they should sometimes be encouraged), systems modeling and data analysis, diagrams and visual mapping can enhance participants’ understanding, and should be offered as needed in the discourse. By the same token, the systematic assessment of arguments, for example, will require weeding out repetitive entries in the discourse displays and worksheets, which can improve overview and understanding.

A further warning against ‘obvious’ confidence in premature judgments comes from the many different ways of aggregating both personal and group judgments into decision guides or indicators: such indicators should not even be called, let alone misused as, ‘decision criteria’.

Requirements for acceptance: training, education

Even with the best efforts to make the basic framework as simple and understandable to lay participants as possible, the variety of possible attitudes, expectations, assumptions and corresponding techniques and tools raises the question of accessibility for as many segments of the community as may be affected by planning projects and the problems they aim to address. How can the average person comfortably participate in the planning discourse if the concepts, language, tools and needed procedural agreements are unfamiliar and thus confusing? Even the expression ‘average’ is ‘wrong’ here: don’t crises and emergencies tend to hurt poorer, less educated people more than the ‘average’ members of the community? Yet it is precisely those people’s information that is needed to properly address their concerns.

Traditionally, a main task of public education is to prepare citizens for the planning and political discourse. It is unlikely that current education systems offer the understanding and skills required for even basic participation in the kind of online, asynchronous planning and policy-making process sketched out for the proposed planning discourse platform and its provisions for evaluation. And the prospect of getting the bureaucracies of all the world’s education systems, public or private, to include this material in their curricula itself looks like a planning project of unprecedented magnitude and complexity. So it seems that the task of educating and training all potential users, as well as the needed staff for the platform, calls for radically new approaches. Would an online ‘planning game’, based on a simple version of the process and run on cellphones, which are increasingly available even in poor communities, be a better step towards this task? (This idea was tentatively explored in a paper on Academia.edu.) The challenge of education and training might itself be the first project serving as the necessary test case and experiment, fueled and funded by little more than the consultants’ competitive desire to have their approaches included in the ‘tool kit’ of a simple common overall platform and process.

–o–

Comments / Unresolved Questions:

Who makes or should make evaluation judgments?

The summary does not address the issue of who will or should make the evaluation judgments needed in any version of a revised planning discourse platform. The answer may be ‘given’ in projects entirely covered by the constitutional or institutional governance structure in place, with the results of any deliberation process serving as recommendations to the responsible final decision-makers, not as their mandatory decision criteria. But aren’t many projects controversial precisely because that responsibility or right is being challenged, by the nature of the problem or by its affecting groups in different (possibly already competing or traditionally ‘adversarial’) governance entities? The question may be considered beyond the scope of this review of evaluation. But the review has identified features of the planning process implying evaluation judgment ‘rights’ or roles that can be seen as challenging traditional decision-making rights and responsibilities: the design of the platform is a political matter, and should be put up for discussion as such.

Specific examples of questions raised by the suggestions for evaluation that bear on platform design include the following: the assumption of wide public participation both in assembling the information that calls for ‘due consideration’ and in making evaluation judgments about it; the issue of whether only ‘discourse participants’ (who have entered comments or information at earlier discourse stages) should have such evaluation ‘judgment’ rights, or whether the results of the discourse deliberation must be put to a ‘vote’ (for example) by the entire public, including people who have not given the issue any consideration; the issue of ‘group aggregation’ functions for generating ‘group’ decision indicators or guides from individual judgments; and, last but not least, how to proceed when deliberation outcomes such as the individual or ‘group’ proposal plausibility scores end up too close to the ‘zero’ midpoint (‘don’t know, can’t decide’) even for immensely important decisions (the phenomenon discussed in the ‘plausibility paradox’ section).
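Why the choice of ‘group aggregation’ function matters can be illustrated with a small Python sketch. It assumes individual plan plausibility scores on a -1 to +1 scale and compares three hypothetical group indicators: the mean, the median, and the minimum (the judgment of the least convinced participant). The 0.1 band around the ‘zero’ midpoint used to flag ‘don’t know, can’t decide’ results is an arbitrary assumption, and the scores are invented for illustration, not data.

```python
from statistics import mean, median
from typing import Dict, List


def group_indicators(scores: List[float]) -> Dict[str, float]:
    """Alternative group 'decision guides' from individual plausibility scores in [-1, +1]."""
    return {
        "mean": mean(scores),
        "median": median(scores),
        "min": min(scores),  # the least convinced participant's score
    }


def near_midpoint(score: float, band: float = 0.1) -> bool:
    # Hypothetical threshold: scores inside the band count as "don't know, can't decide".
    return abs(score) < band


# Invented individual plan plausibility scores of five participants.
individual = [0.6, 0.3, -0.4, 0.1, -0.5]
for name, value in group_indicators(individual).items():
    flag = "near zero midpoint" if near_midpoint(value) else ""
    print(name, round(value, 2), flag)
```

With these scores the mean falls inside the ‘don’t know’ band while the median does not, and the minimum is clearly negative: the same individual judgments yield different group ‘results’ depending on the aggregation rule. This is one reason such indicators should serve as decision guides, not as decision criteria.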

Another issue that has not been adequately explored is the potential role of AI tools, for example in the assessment of the ‘supporting evidence’ for planning arguments. It was pointed out that deontic (‘ought’) premises can only be judged by people affected in some way by the problem at hand, and that ‘supporting evidence’ for those premises will inevitably consist of further arguments with the same structure as the original planning argument, containing further deontic premises. But can the ‘factual’ logic and data-supported evidence that AI algorithms can supply and assess for the factual and factual-instrumental premises become so dominant in the presentation of the evidence that it ‘overwhelms’ consideration of the deontic premises involved, to the point where ‘technocratic’ planners explicitly demand that planning decisions be based on ‘facts’ (only)?

These added considerations underscore the need for more discussion of these issues: they are no more adequately resolved in the proposals for a better planning discourse platform than in current practice.