The following study will try to explore the possibility of combining the contribution of ‘Systems Thinking’ 1 — systems modeling and simulation — with that of the ‘Argumentative Model of Planning’ 2 expanded with the proposals for systematic and transparent evaluation of ‘planning arguments’.

Both approaches have significant shortcomings in accommodating their mutual features and concerns. Briefly: While systems models do not accommodate and show any argumentation (of ‘pros and cons’) involved in planning and appear to assume that any differences of opinion have been ‘settled’, individual arguments used in planning discussions do not adequately convey the complexity of the ‘whole system’ that systems diagrams try to convey. Thus, planning teams relying on only one of these approaches to problem-solving and planning (or any other single approach exhibiting similar deficiencies) risk making significant mistakes and missing important aspects of the situation.

This mutual discrepancy raises the suggestion to resolve it either by developing a different model altogether, or combining the two in some meaningful way. The exercise will try to show how some of the mutual shortcomings could be alleviated — by procedural means of successively feeding information drawn from one approach to the other, and vice versa. It does not attempt to conceive a substantially different approach.

Starting from a very basic situation: Somebody complains about some current ‘Is’-state of the world (IS) he does not like: ‘Somebody do something about IS!’

The call for Action (A plan is desired) raises a first set of questions besides the main one: Should the plan be adopted for implementation: D?:

(Questions / issues will be italicized. The prefixes distinguish different question types: D for ‘deontic’ or ‘ought’ questions, E for explanatory questions, I for instrumental or actual-instrumental questions, F for factual questions; the same notation can be applied to individual claims):

Traditional approaches at this stage recommend doing some ‘research’. This might include both the careful gathering of data about the IS situation, as well as searching for tools, ‘precedents’ of the situation, and possible solutions used successfully in the past.

At this point, a ‘Systems Thinking’ (ST) analyst may suggest that, in order to truly understand the situation, it should be looked at as a system, and a ‘model’ representing that system be developed. This would begin by identifying the ‘elements’ or key variables V of the system, and the relationships R between them. Since so far, very little is known about the situation, the diagram of the model would be trivially simple:

(IS) –> REL –> (OS)

or, more specifically, representing the IS and OS states as sets of values of variables:

{VIS} –> REL(IS/OS) –> {VOS}

(The {…} brackets indicate that there may be a set of variables describing the state).

So far, the model simply shows the IS-state and the OS-state, as described by a variable V (or a set of variables), and the values for these variables, and some relationship REL between IS and OS.
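As a minimal sketch of this starting model, the two states and the relationship between them could be held in a small data structure. The class names `State` and `Relationship`, the variable name `V1`, and its values are illustrative assumptions, not part of the notation used here.

```python
from dataclasses import dataclass, field


@dataclass
class State:
    """A named state described by a set of variable values, e.g. {V_IS}."""
    name: str
    values: dict = field(default_factory=dict)


@dataclass
class Relationship:
    """REL(IS/OS): links a source state (IS) to a target state (OS)."""
    source: State
    target: State

    def gaps(self) -> dict:
        """Return OS value minus IS value for each variable both states describe."""
        shared = self.source.values.keys() & self.target.values.keys()
        return {v: self.target.values[v] - self.source.values[v]
                for v in sorted(shared)}


# Hypothetical values: one variable whose IS value is 40 and whose desired OS value is 25.
is_state = State("IS", {"V1": 40.0})
os_state = State("OS", {"V1": 25.0})
rel = Relationship(is_state, os_state)
print(rel.gaps())  # {'V1': -15.0}
```

At this stage the structure says nothing about how the gap might be closed; it only records that a discrepancy between IS and OS exists.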

Another ST consultant suggests that the discrepancy between the situation as it IS and as it ought to be (OS), as perceived by a person [P1], may be called a ‘problem’ IS/OS, and proposes to resolve it by identifying its ‘root cause’ RC:

E(RC of IS)? What is the root cause of IS?

and

F(RC of IS)? Is RC indeed the root cause of IS?

Yet another consultant might point out that any causal chain is really potentially infinitely long (any cause has yet another cause…), and that it may be more useful to look for ‘necessary conditions’ NC for the problem to exist, and perhaps for ‘contributing factors’ CF that aggravate the problem once occurring (but don’t ’cause’ it):

E(NC of IS/OS)? What are the necessary conditions for the problem to exist?

F(NC of IS/OS)? Is the suggested condition actually a NC of the problem?

and

E(CF of IS/OS)? What factors contribute to aggravate the problem once it occurs?

F(CF of IS/OS)?

These suggestions are based on the reasoning that if a NC can be identified and successfully removed, the problem ceases to exist, and/or if a CF can be removed, the problem could at least be alleviated.
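The reasoning about necessary conditions and contributing factors can be sketched in a few lines. The function name, the additive severity rule, and all numbers are assumptions made for illustration only; the text does not prescribe how severity would be measured.

```python
def problem_severity(necessary_conditions, contributing_factors, base_severity=1.0):
    """Return 0.0 if any necessary condition is absent (the problem ceases to exist).

    Otherwise return the base severity plus the sum of the contributing
    factors that aggravate the problem. The additive rule is an assumption.
    """
    if not all(necessary_conditions.values()):
        return 0.0
    return base_severity + sum(contributing_factors.values())


# Hypothetical problem with two necessary conditions and two contributing factors.
nc = {"NC1": True, "NC2": True}
cf = {"CF1": 0.5, "CF2": 0.25}

before = problem_severity(nc, cf)                                # 1.75
without_cf1 = problem_severity(nc, {"CF2": 0.25})                # 1.25: alleviated
without_nc2 = problem_severity({"NC1": True, "NC2": False}, cf)  # 0.0: resolved
```

Removing a contributing factor only lowers the value, while removing a single necessary condition brings it to zero, which mirrors the distinction made above.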

Either form of analysis is expected to produce ideas for potential means or Actions to form the basis of a plan to resolve the problem and can be put up for debate. As soon as such a specific plan of action is described, it raises the question:

E(PLAN A)? Description of the plan?

and

D(PLAN A)? Should the plan be adopted / implemented?

The ST model-builder will have to include these items in the systems diagram, with each factor impacting specific variables or system elements V.

RC –> REL(RC-IS) –> {V(IS)}

{NC} –> REL(NC-IS) –> { V(IS) } –> REL –> {V(OS)}

{CF} –> REL(CF-IS) –> {V(IS)}

Elements in ‘{…}’ brackets denote sets of items of that type. It is of course possible that one such factor influences several or all system elements at the same time, rather than just one. Of course, Plan A may include aspects of NC, CF, or RC. If these consist of several variables with their own specific relationships, they will have to be shown in the model diagram as such.

An Argumentative Model (AM) consultant will insist that a discussion be arranged, in which questions may be raised about the description of any of these new system elements and whether and how effectively they will actually perform in the proposed relationship.

Once causality has been invoked, questions will be raised about what further effects or ‘consequences’ CQ the OS-state will have, once achieved; what these will be like; and whether they should be considered desirable, undesirable (the proverbial ‘unexpected consequences’ or side-effects), or merely neutral. To be as thorough as the mantra of Systems Thinking demands, and to consider ‘the whole system’, that same question should be raised about the initial actions of PLAN A: it may have side-effects not considered in the desired problem-solution OS. Should they be included in the examination of the desired ‘Ought-state’? So:

For {OS} –> {CQ of OS}:

E(CQ of OS)? (what is/are the consequences? Description?)

D(CQ of OS)? (is the consequence desirable / undesirable?)

For PLAN A –> {CQ of A}:

E(CQ of A)?

and

D(CQ of A)?

For the case that any of the consequence aspects are considered undesirable, additional measures might be suggested, to avoid or mitigate these effects, which then must be included in the modified PLAN A’, and the entire package be reconsidered / re-examined for consistency and desirability.

The systems diagram would now have to be amended with all these additions. The great advantage of systems modeling is that many constellations of variable values can be considered as potential ‘initial settings’ of a system simulation run (plan alternatives), and the development of each variable can be tracked (simulated) over time. In any system with even moderate complexity and a number of loops (variables in a chain of relationships that causally affect other variables ‘earlier’ in the chain), the outcomes will become ‘nonlinear’ and quite difficult and ‘counter-intuitive’ to predict. Both the possibility of inspecting the diagram showing ‘the whole system’ and the exploration of different alternatives contribute immensely to the task of ‘understanding the system’ as a prerequisite to taking action.
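A minimal sketch of such a simulation run follows, assuming a deliberately tiny system of two variables in one loop. The coefficients `gain` and `feedback`, the update rule, and the two ‘initial settings’ are hypothetical and chosen only to show the mechanics of tracking variables over time; the loop here is linear, so it illustrates the bookkeeping rather than the ‘nonlinear’ behavior of larger models.

```python
def simulate(v1, v2, steps, gain=0.5, feedback=-0.25):
    """Track two variables over time; v1 drives v2, and v2 loops back on v1.

    gain and feedback are hypothetical coefficients for REL(V1->V2) and
    REL(V2->V1). Both updates use the values of the previous time unit.
    """
    history = [(v1, v2)]
    for _ in range(steps):
        v1, v2 = v1 + feedback * v2, v2 + gain * v1
        history.append((v1, v2))
    return history


# Two plan alternatives, expressed as different initial settings.
plan_a = simulate(v1=1.0, v2=0.0, steps=3)
plan_b = simulate(v1=1.0, v2=2.0, steps=3)

print(plan_a[-1])  # (0.625, 1.4375)
print(plan_b[-1])  # (-0.8125, 2.6875)
```

Even in this small example the second run is not obvious at a glance: v2 rises for two time units (2.5, 2.75) and then starts to fall (2.6875) because v1 has meanwhile turned negative.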

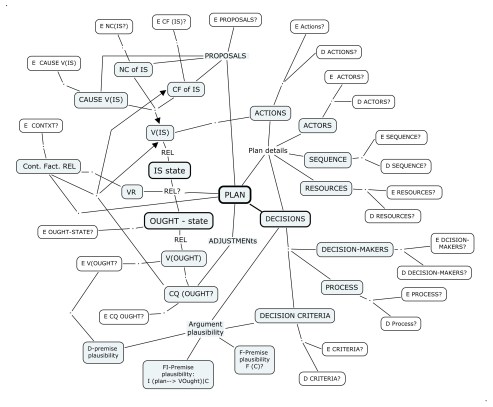

While systems diagrams do not usually show either ‘root’ causes, ‘necessary conditions’, or ‘contributing factors’ of each of the elements in the model, these will now have to be included, as well as the actions and needed resources of PLANS setting the initial conditions to simulate outcomes. A simplified diagram of the emerging model, with possible loops, is the following:

(Outside uncontrolled factors (context))

/ / | | | \ \ \

PLAN->REL -> (RC, NC, CF) -> REL -> (IS) -> REL -> (OS) -> REL ->(CQ)

\ \ \ | | | / / /

forward and backward loops

A critical observer might call attention to a common assumption in simulation models, a remaining ‘linearity’ feature that may not be realistic: In the network of variables and relationships, the impact of a change in one variable V1 on the connected ‘next’ variable V2 is assumed to occur stepwise during one time unit i of the simulation, the change in the following variable V3 in the following time unit i+1, and so on. Delays in these effects may be accounted for. But what if the information about that change in time unit i is distributed throughout the system much faster, even ‘almost instantaneously’, compared to the actual and possibly delayed substantial effects (e.g. ‘flows’) the diagram shows with its explicit links? This might have the effect that actors and decision-makers concerned about variables elsewhere in the system, for reasons unrelated to the problem at hand, take ‘preventive’ steps that could change the expected simulated transformation. Of course, such actors and decision-makers are not shown…

Systems diagrams ordinarily do not acknowledge that — to the extent there are several parties involved in the project, and affected in different ways by either the initial problem situation or by proposed solutions and their outcomes — those different parties will have significantly different opinions about the issues arising in connection with all the system components, if the argumentation consultant manages to organize discussion. The system diagram only represents one participant’s view or perspective of the situation. It appears to assume that what ‘counts’ in making any decisions about the problem are only the factual, causal, functional relationships in the system, as determined by one (set of) model-builder. Thus, those responsible for making decisions about implementing the plan must rely on a different set of provisions and perspectives to convert the gained insights and ‘understanding’ of the system and its working into sound decisions.

Several types of theories and corresponding consultants offer suggestions for how to do this. Given the particular way their expertise is currently brought into planning processes, they usually reflect just the main concerns of the clients they are working for. In business, the decision criterion is, obviously, the company’s competitive advantage resulting in reliable earnings: profit, over time. Thus for each ‘alternative’ plan considered (different initial settings in the system), and the actions and resources needed to achieve the desired OS, the ‘measure of performance’ associated with the resulting OS will be profit: earnings minus costs. For government consultants (striving to ‘run government like a business’?) the profit criterion may have to be labeled somewhat differently, say ‘benefit’ and ‘cost’ of government projects, and their relationship expressed as B-C or the more popular B/C, the benefit-cost ratio. For overall government performance, the ‘Gross National Product’ (GNP) is the equivalent measure. The shortcomings and problems associated with such approaches led to calls for using ‘quality of life’ or ‘happiness’ or Human Development Indices instead, and criteria for sustainability and ecological aspects. All or most such approaches still suffer from the shortcoming of constructing overall measures of performance, a shortcoming because such measures inevitably represent only one view of the problems or projects: differences of opinion or significant conflicts are made invisible.
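The two single measures mentioned above, B-C and B/C, can be computed side by side. The plan names and the benefit and cost figures below are invented for illustration; the sketch only shows that even these two closely related criteria need not select the same plan.

```python
def net_benefit(benefit, cost):
    """B-C: benefit minus cost."""
    return benefit - cost


def benefit_cost_ratio(benefit, cost):
    """B/C: benefit divided by cost."""
    return benefit / cost


# Hypothetical plans as (benefit, cost) pairs.
plans = {"X": (100.0, 50.0), "Y": (30.0, 10.0)}

best_by_difference = max(plans, key=lambda p: net_benefit(*plans[p]))
best_by_ratio = max(plans, key=lambda p: benefit_cost_ratio(*plans[p]))

print(best_by_difference)  # X  (B-C: 50 versus 20)
print(best_by_ratio)       # Y  (B/C: 2.0 versus 3.0)
```

Whichever measure is chosen, it still compresses the assessment into one number from one point of view, which is the limitation noted in the text.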

In the political arena, any business and economic considerations are overlaid if not completely overridden by the political decision criteria — voting percentages. Most clearly expressed in referenda on specific issues, alternatives are spelled out, more or less clearly, so as to require a ‘yes’ or ‘no’ vote, and the decision criterion is the percentage of those votes. Estimates of such percentages are increasingly produced by opinion surveys sampling just a small but ‘representative’ number of the entire population, and these aim to have a similar effect on decision-makers.

Both Systems Thinkers and advocates of the extended Argumentative Model are disheartened by the fact that in these business and governance habits, all the insight produced by their respective analysis efforts seems to have little if any visible connection with the simple ‘yes/no’ opinion poll or referendum votes. Rightfully so, and their concern should properly be with constructing better mechanisms for making that connection. From the Argumentative Model side, such an effort has been made with the proposed evaluation approach for planning arguments, though with clear warnings against using the resulting ‘measures’ of plan plausibility as convenient substitutes for decision criteria. The reasons for this have to do with the systemic incompleteness of the planning discourse: there is no guarantee that all the concerns that influence a person’s decision about a plan and should be given ‘due consideration’ (and therefore should be included in the evaluation) actually can and will be made explicit in the discussion.

To some extent, this is based on the different attitudes discourse participants will bring to the process. The straightforward assumption of mutual trust and cooperativeness aiming at mutually beneficial outcomes (‘win-win’ solutions) obviously does not apply to all such situations, though there are many well-intentioned groups and initiatives that try to instill and grow such attitudes, especially when it comes to global decisions about issues affecting all humanity such as climate, pollution, disarmament, global trade and finance. The predominant business assumption is that of competition, seeing all parties as pursuing their own advantages at the expense of others, resulting in zero-sum outcomes: win-lose solutions. A number of different situations can be distinguished according to whether the parties share or have different attitudes in the same discourse. The ‘extreme’ positions are completely sharing the same attitude and having attitudes on opposite ends of the scale; in between lies something which might be called indifference to the other side’s concerns, as long as these don’t intrude on one’s own concerns, in which case the attitude likely shifts to the win-lose position, at least for that specific aspect.

The effect of these issues can be seen by looking at the way a single argument about some feature of a proposed plan might be evaluated by different participants, and how the resulting assessments would change decisions. Consider, for the sake of simplicity, the argument in favor of a Plan A by participant P1:

D(PLAN A)! Position (‘Conclusion’): Plan A ought to be adopted

because

F((VA –> REL(VA–>VO) –> VO) | VC) Premise 1: Variable VA of plan A will result in (e.g. cause) variable VO, given condition VC;

and

D(VO) Premise 2: Variable VO ought to be aimed for;

and

F(VC) Premise 3: Variable VC is the case.

Participant P1 may be quite confident (but still open to some doubt) about these premises, and of being able to supply adequate evidence and support arguments for them in turn. She might express this by assigning the following plausibility values to them, on the plausibility scale of -1 to + 1, for example:

Premise 1: +0.9

Premise 2: +0.8

Premise 3: +0.9

One simple argument plausibility function (multiplying the plausibility judgments) would result in an argument plausibility of +0.648; a not completely ‘certain’ but still comfortable result supporting the plan. Another participant P2 may agree with premises 1 and 2, assigning the same plausibility values to those as P1, but have considerable doubt as to whether the condition VC is indeed present to guarantee the effect of premise 1. Expressed by the low plausibility score of +0.1 for premise 3, this would yield an argument plausibility of about +0.07; a result that can be described as too close to ‘don’t know if VA is such a good idea’. If somebody else, participant P3, disagrees with the desirability of VO, and therefore assigns a negative plausibility of, say, -0.5 to premise 2 while agreeing with P1 about the other premises, his result would be -0.405, using the same crude aggregation formula. (These are of course up for discussion.) The issue of weight assignment has been left aside here: since only one argument is being considered, the weight of its deontic premise is 1, for the sake of simplicity.

The difference in these assessments raises not only the question of how to obtain a meaningful common plausibility value for the group, as a guide for its decision. It might also cause P1 to worry whether P3 would consider taking ‘corrective’ (in P1’s view ‘subversive’?) actions to mitigate the effect of VA should the plan be adopted, e.g. by majority rule, or by following the result of some group plan plausibility function such as taking the average of the individual argument plausibility judgments as a decision criterion. (This is not recommended by the theory.) And finally: should these assessments, with their underlying assumptions of cooperative, competitive, or neutral, disinterested attitudes, and the potential actions of individual players in the system to unilaterally manipulate the outcome, be included in the model and its diagram or map?
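The three assessments can be reproduced with a few lines of code. This is a sketch of the crude product formula only; the function name is an assumption, and the group average is computed merely to show what such a figure would look like, not as a recommended decision criterion.

```python
from math import prod
from statistics import mean


def argument_plausibility(premise_plausibilities):
    """Crude aggregation: multiply the premise plausibilities (each in [-1, +1])."""
    return prod(premise_plausibilities)


# Plausibility judgments for premises 1, 2 and 3 as given in the example.
judgments = {
    "P1": [0.9, 0.8, 0.9],
    "P2": [0.9, 0.8, 0.1],
    "P3": [0.9, -0.5, 0.9],
}

results = {p: round(argument_plausibility(v), 3) for p, v in judgments.items()}
print(results)  # {'P1': 0.648, 'P2': 0.072, 'P3': -0.405}

# Averaging the individual results (shown for illustration only).
group_average = round(mean(results.values()), 3)
```

The average comes to roughly +0.1, a value that hides the fact that one participant is comfortably in favor, one is close to undecided, and one is opposed. The product rule is crude in another respect as well: two negative premise judgments would multiply to a positive result.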

While a detailed investigation of the role of these attitudes on cooperative planning decision-making seems much needed, this brief overview already makes it clear that there are many situations in which participants have good reasons not to contribute complete and truthful information. In fact, the prevailing assumption is that secrecy, misrepresentation, misleading and deceptive information and corresponding efforts to obtain such information from other participants — spying — are part of the common ‘business as usual’.

So how should systems models and diagrams deal with these aspects? The ‘holistic’ claim of showing all elements so as to offer a complete picture and understanding of a system arguably would require this: ‘as completely as possible’. But how? Admitting that a complete understanding of many situations actually is not possible? What a participant does not contribute to the discourse, the model diagram can’t show. Should it (cynically?) announce that such ‘may’ be the case — and that therefore participants should not base their decisions only on the information it shows? To truly ‘good faith’ cooperative participants, sowing distrust this way may be perceived as somewhat offensive, and itself actually interfere with the process.

The work on systems modeling faces another significant unfinished task here. Perhaps another look at the way we are making decisions as a result of planning discussions can help somewhat.

The discussion itself assumes that it is possible and useful to work towards better decisions: presumably, better than decisions made without the information it produces. It does not, inherently, condone the practice of sticking to a preconceived decision no matter what is being brought up (nor the arrogant attitude behind it: ‘my mind is made up, no matter what you say…’). The question of how we make decisions has two parts. One is related to the criteria we use to convert the value of information to decisions. The other concerns the process itself: the kinds of steps taken, and their sequence.

It is necessary to quickly go over the criteria issue first; some criteria were already discussed above. Those that can be assumed to be used by the single decision-maker at the helm of a business enterprise (which of course is a simplified picture), namely profit, ROI, and their variants arising from planning horizon, sustainability and PR considerations, are single measures of performance attached to the alternative solutions considered. The rule for this decision ‘under certainty’ is: select the solution having the ‘best’ (highest, maximized) value. (‘Value’ here is understood simply as the number of the criterion.) That picture is complicated for decision situations under risk, where outcomes have different levels of probability, or under complete uncertainty, where outcomes are governed neither by predictable laws nor even by probability, but by other participants who may attempt to anticipate the designer’s plans and actively seek to oppose them. This is the domain of decision and game theory, whose analyses may produce guidelines and strategies for decisions; but again, these will be different decisions or strategies for different participants in the planning. The factors determining these strategies are arguably significant parts of the environment or context that designers must take into account, and that systems models should represent, to produce a viable understanding of the problem situation.

The point to note is that systems models permit simulation of these criteria (profit, life cycle economic cost or performance, ecological damage or sustainability) because they are single measures, presumably collectively agreed upon (which is at least debatable). But once the use of plausibility judgments as measures of performance is considered as a possibility, even as aggregated group measures, the ability of systems models and diagrams to accommodate them becomes very questionable, to say the least. It would require the input of many individual (subjective) judgments, which are generated as the discussion proceeds, and some of which will not be made explicit even if there are methods available for doing this.
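The decision rules for single measures can be sketched as follows. Under certainty the rule is the one stated above (pick the highest value). For the case under risk the sketch assumes expected-value maximization, which is one common rule from decision theory and not one the text commits to. All names and numbers are hypothetical.

```python
def best_under_certainty(values):
    """Select the alternative with the highest criterion value."""
    return max(values, key=values.get)


def expected_value(outcomes):
    """Probability-weighted sum over (probability, value) pairs."""
    return sum(p * v for p, v in outcomes)


def best_under_risk(alternatives):
    """Select the alternative with the highest expected value (assumed rule)."""
    return max(alternatives, key=lambda name: expected_value(alternatives[name]))


# Hypothetical profit figures for two plans, known with certainty.
certain = {"A": 120.0, "B": 100.0}

# The same plans under risk: A either pays 200 or nothing, B pays 110 for sure.
risky = {"A": [(0.5, 200.0), (0.5, 0.0)], "B": [(1.0, 110.0)]}

print(best_under_certainty(certain))  # A
print(best_under_risk(risky))         # B
```

Both rules work only because each alternative can be reduced to one number. Individual plausibility judgments, which differ by participant and emerge during the discussion, do not fit this scheme without further agreement on how to aggregate them.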

This shift of criteria for decision-making raises the concerns about the second question, the process: the kinds of steps taken, by what participants, according to what rules, and their sequence. If this second aspect does not seem to need or require much attention — the standard systems diagrams again do not show it — consider the significance given to it by such elaborate rule systems as parliamentary procedure, ‘rules of order’ volumes, even for entities where the criterion for decisions is the simple voting percentage. Any change of criteria will necessarily have procedural implications.

By now, the systems model for even the simple three-variable system we started out with has become so complex that it is difficult to see how it might be represented in a diagram. The additional aspects discussed above (the discourse with controversial issues, the conditions and subsequent causal and other relationships of plan implementation requirements and further side-effects, and the attitudes and judgments of individual parties involved in and affected by the problem and proposed plans) complicate the modeling and diagram display tasks to an extent where these are likely to lose their ability to support the process of understanding and arriving at responsible decisions. I do not presume to have any convincing solutions for these problems and can only point to them as urgent work to be done.

Evolving ‘map’ of ‘system’ elements and relationships, and related issues

Meanwhile, from a point of view of acknowledging these difficulties but trying, for now, to ‘do the best we can with what we have’, it seems that systems models and diagrams should continue to serve as tools to understand the situation and to predict the performance of proposed plans, provided some of the aspects discussed can be incorporated into the models. The construction of the model must draw upon the discourse that elicits the pertinent information (through the ‘pros and cons’ about proposals). The model-building work therefore must accompany the discourse; it cannot precede or follow the discussion as a separate step. Standard ‘expert’ knowledge-based analysis (conventional ‘best practice’ and research-based regulations, for example) will be as much a part of this as the ‘new’, ‘distributed’ information that is to be expected in any unprecedented ‘wicked’ planning problem and that can only be brought out in the discourse with affected parties.

The evaluation preparing for decision — whether following a customary formal evaluation process or a process of argument evaluation — will have to be a separate phase. Its content will now draw upon and reflect the content of the model. The analysis of its results — identifying the specific areas of disagreement leading to different overall judgments, for example — may lead to returning to previous design and model (re-)construction stages: to modify proposals for more general acceptability, or better overall performance, and then return to the evaluation stage supporting a final decision. Procedures for this process have been sketched in outline but remain to be examined and refined in detail, and described concisely so that they can be agreed upon and adopted by the group of participants in any planning case before starting the work, as they must, so that quarrels about procedure will not disrupt the process later.

Looking at the above map again, another point must be made: once again, the criticism of systems diagrams seems to have been ignored, namely that the diagram still only expresses one person’s view of the problem. The system elements called ‘variables’, for example, are represented as elements of ‘reality’, and the issues and questions about them are expected to yield ‘real’ (that is, real for all participants) answers and arguments. Taking the objection seriously, would we not have to acknowledge that ‘reality’ is known to us only imperfectly, if at all, and that each of us has a different mental ‘map’ of it? Thus, each item in the systems map should perhaps be shown as multiple elements referring to the same thing, labeled as something we think we know and agree about, but with one bubble of the item for each participant in the discourse. These bubbles will possibly, even likely, not be congruent but only overlapping at best, and at worst cover totally different content or meaning: the content that is then expected to be explained and explored in follow-up questions. Systems Thinking has acknowledged this issue in principle: ‘the map (the systems model and diagram) is NOT the landscape’ (the reality). But this insight should itself be represented in a more ‘realistic’ diagram, realistic in the sense that it acknowledges that all the detail information contributed to the discourse and the diagram will be assembled in different ways by each individual into different, only partially overlapping ‘maps’.

An objection might be that the system model should ‘realistically’ focus on those parts of reality that we can work with (control? or at least predict?) with some degree of ‘objectivity’: the overlap we strive for with the ‘scientific’ method of replicable experiments, observations, measurements, logic, and statistical confirmation. And that the concepts different participants carry around in their minds to make up their different maps are just ‘subjective’ phenomena that should ‘count’ in our discussions about collective plans only to the extent they correspond (‘overlap’) to the objective, measurable elements of our observable system. The answer is that such subjective elements as individual perspectives about the nature of the discourse as cooperative or competitive etc. are phenomena that do affect the reality of our interactions. Mental concepts are ‘real’ forces in the world, so should they not be acknowledged as ‘real’ elements with ‘real’ relationships in the relationship network of the system diagram?

We could perhaps state the purpose of the discourse as that of bringing those mental maps into sufficiently close overlap for a final decision to become sufficiently congruent in meaning and acceptability for all participants, with the resulting ‘maps’ along the way having a sufficient degree of overlap. What is ‘sufficient’ for this, though? And does that apply to all aspects of the system? Are not all our plans in part also meant to help us pursue our own, that is, our different versions of happiness? We all want to ‘make a difference’ in our lives (some more than others, of course) and each in our own way. The close, complete overlap of our mental maps is a goal and obsession of societies we call ‘totalitarian’. If that is not what we wish to achieve, should the principle of plan outcomes leaving and offering (more? better?) opportunities for differences in the way we live and work in the ‘ought-state’ of problem solutions be an integral element of our system models and diagrams? This would be represented as a description of the outcome consisting of ‘possibility’ circles that have ‘sufficient’ overlap, sure, but also a sufficient degree of non-overlap: ‘difference’ opportunity outside of the overlapping area. Our models and diagrams and system maps don’t even consider that. So is Systems Thinking, proudly claimed as being ‘the best foundation for tackling societal problems’ by the Systems Thinking forum, truly able to carry the edifice of future society yet? For its part, the Argumentative Model claims to accommodate questions from all kinds of perspectives, including questions such as these, but the mapping and decision-making tools for arriving at meaningful answers and agreements are still very much unanswered questions. The maps, for all their crowded data, have large undiscovered areas.

The emerging picture of what a responsible planning discourse and decision-making process for the social challenges we call ‘wicked problems’, would look like, with currently available tools, is not a simple, reassuring and appealing one. But the questions that have been raised for this important work-in-progress, in my opinion, should not be ignored or dismissed because they are difficult. There are understandable temptations to remain with traditional, familiar habits — the ones that arguably often are responsible for the problems? — or revert to even simpler shortcuts such as placing our trust in the ability and judgments of ‘leaders’ to understand and resolve tasks we cannot even model and diagram properly. For humanity to give in to those temptations (again?) would seem to qualify as a very wicked problem indeed.

—

Notes:

1 The understanding of ‘systems thinking’ (ST) here is based on the predominant use of the term in the ‘Systems Thinking World’ Network on LinkedIn.

2 The Argumentative Model (AM) of Planning was proposed by H. Rittel, e.g. in the paper ‘APIS: A Concept for an Argumentative Planning Information System’, Working paper 324, Institute of Urban and Regional Development, University of California, 1980. It sees the planning activity as a process in which participants raise issues (questions to which there may be different positions and opinions) and support their positions with evidence, answers and arguments. From the ST point of view, AM might just be considered a small, somewhat heretical sect within ST…
