Thorbjørn Mann, April 2020

WEIGHTING: ‘WEIGHING THE PROS AND CONS’

Concepts and Rationale

Much of the discussion and many of the examples in the preceding sections may seem to have taken weighting for granted, whether of aspects in formal evaluation procedures or of deontic (ought-) claims in arguments. The entire effort of designing better platforms and procedures for public planning discourse is focused in part on exploring how the common phrase of “carefully weighing the pros and cons” in making decisions about plans could be supported by specific explanations of what it means and, more importantly, how it would be done in detail. Within the perspectives of formal evaluation or assessment of planning arguments (see previous posts on formal evaluation procedures and evaluation of planning arguments), the question of ‘why’ appears not to require much justification: it seems almost self-evident that some of the various pro and con arguments carry more ‘weight’ in influencing the decision than others. Even if there is only one ‘pro’ and one ‘con’, shouldn’t the decision depend on which argument is the more ‘weighty’ one?

The allegorical figure of Justice carries a balance for weighing the evidence of opposing legal arguments. (Curiously, the blindfolded lady is supposed to base her decision on the heavier weight, not on the social status, power or wealth of the arguing parties; but is she not even to see the tilt?) Of the many evaluation aspects of formal evaluation procedures, there may be some that really don’t ‘matter’ much to any of the parties affected by the problem or the proposed solution that must be decided upon. Decision-makers making decisions on behalf of others can (should?) be asked to explain their basis of judgment. Wouldn’t their answer be considered incomplete without some mention of which aspects carry more weight than others in their decision?

While it does not seem that many such questions are asked (perhaps because the questioners are used to not getting very satisfactory answers?), there is no lack of advice for evaluators about how they might express this weighting process: for example, how to assign a meaningful set of weights to the different aspects and sub-aspects in an evaluation aspect ‘tree’. But the process is often considered cumbersome enough to tempt participants to skip the added complication of making such assignments, and instead to raise questions of ‘what difference does it make?’, whether it is really necessary, or how meaningful the different techniques for doing this really are.

Finally, there are significant approaches to design and planning that propose to do entirely without recourse to explicit ‘pro and con’ weighting. Among these are the familiar traditions of voting, decision rules of ‘taking the sense’ of the discussion by a facilitator in the pursuit of consensus or the appearance of consensus or consent, upon more or less organized and thorough discussion, during which the weight or relevance, significance of the different discussion entries is assumed to have been sufficiently well articulated. Another is the method of sequential elimination of solution alternatives (for example by voting ‘out’, not ‘in’) until there is only one alternative left. A fundamentally different method is that of generating the plan or solution from elements (or according to accepted rules) that have been declared valid by authority, theory, or tradition, which are assumed to ‘guarantee’ that the outcome will also be good, valid, beautiful etc.

Since the issue of evaluation is somewhat confused by being discussed in various different terms: ‘weights of relative importance’; ‘priorities’, ‘relevance’, ‘principles’, ‘preferences’; ‘significance’, ‘urgency’, and there are yet unresolved questions within each of the major approaches, some exploration of the issue seems in order: to revive what looks at this point as a needed, unfinished discussion.

Figure 1 — Weighting in planning evaluation: overview

Different ways of dealing with the ‘weighting’ issue

Principle

A first, simple form of expressing opinions about importance is the use of principles in the considerations about a plan. A principle (understood as not only the ‘first’ and foremost consideration but a kind of ‘sine qua non’ or ‘non-negotiable’ condition) can be used to decide whether or not a proposed plan meets the condition of the principle, and to eliminate it from further consideration if it doesn’t. Principles can be lofty philosophical or moral tenets, or simple pragmatic rules such as ‘must meet applicable governmental laws and regulations to get the permit’, applied regardless of whether a proposed plan might be further refined or modified to meet those regulations, or an exemption be negotiated based on unusual considerations. If there are several alternative proposals to be evaluated, this usually requires several ‘rounds’ of successive elimination identifying ‘admissible’, ‘semi-finalist’ and ‘finalist’ contenders up to the determination of the winning entry, by means of one of the ‘decision criteria’ such as simple majority voting, which here would be not voting ‘in’ for adoption or further consideration, but voting ‘out’.

Weight ‘grouping’

A more refined approach that considers evaluation aspects of different degrees of importance is that of assigning those aspects to a few groups of importance, such as ‘highly important’, ‘important’, ‘less important’, ‘optional’ and ‘unimportant’, perhaps assigning aspects in these groups ‘weights’ such as ‘4’, ‘3’, ‘2’, ‘1’ and ‘0’, respectively, to be multiplied with a ‘quality’ or ‘degree of performance’ judgment score before being added up. The problem with this approach can be seen by considering the extreme possibility of somebody assigning all aspects to the highest category of ‘highly important’, in effect making all aspects ‘equally important’: for n aspects, each one contributes 1/n of the weight to the overall judgment.
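The 1/n effect can be checked with a few lines of Python. This is only a sketch: the group labels and the weights 4 to 0 follow one reading of the list above, while the aspect names, the scores and the helper names `grouped_score` and `effective_shares` are invented for illustration.

```python
# Group weights following the 4..0 scheme described above (one possible reading).
GROUP_WEIGHTS = {
    "highly important": 4,
    "important": 3,
    "less important": 2,
    "optional": 1,
    "unimportant": 0,
}

def grouped_score(groups, scores):
    """Sum of (group weight x quality score) over all aspects."""
    return sum(GROUP_WEIGHTS[groups[a]] * scores[a] for a in scores)

def effective_shares(groups):
    """Fraction of the total weight each aspect actually receives.

    Assumes at least one aspect has a non-zero group weight.
    """
    total = sum(GROUP_WEIGHTS[g] for g in groups.values())
    return {a: GROUP_WEIGHTS[g] / total for a, g in groups.items()}

# Invented aspects and quality scores, for illustration only.
scores = {"cost": 1, "safety": 3, "appearance": -2}
all_high = {a: "highly important" for a in scores}
mixed = {"cost": "important", "safety": "highly important", "appearance": "optional"}

print(effective_shares(all_high))    # every aspect ends up with the same share, 1/n
print(effective_shares(mixed))       # shares differ once the groups differ
print(grouped_score(mixed, scores))  # 3*1 + 4*3 + 1*(-2) = 13
```

Putting every aspect into the top group leaves each of the three aspects with a share of one third, so the grouping no longer expresses any difference in importance.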

Ranking and preference

The approach of arranging or ‘ranking’ things in the order of preference (on the ordinal scale) can be applied to the set of alternatives to be evaluated as well as to the aspects to be used in the evaluation. Decision-making by preference ranking (e.g. for the election of candidates for public office) has been studied extensively, e.g. by Arrow [1], who found insurmountable problems for decision-making by different parties, due mainly to ‘paradoxical’ transitivity issues. Simple ranking does not recognize measurable performance (measurable on a ratio or difference scale) where this is applicable, making a coherent ‘quality’ evaluation approach based only on preference ranking impossible.

An interesting variation of this approach is a procedure for deciding whether a proposal should be rejected or accepted, attributed to Benjamin Franklin. It consists of listing the pro and con arguments in separate columns on a sheet of paper, then looking for pairs of pros and cons that seem to be equally important, and striking those two arguments out. The process is continued until only one argument, or one pair, is left; if this argument, or the weightier one of the two, is a ‘pro’ argument, the decision will be in favor of the proposal; if it is a ‘con’ argument, the decision should be rejection. It is not clear how this process can be applied to group decision-making without recourse to other methods, such as voting, of dealing with different outcomes by different parties.
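For a single person, the striking-out procedure can be sketched as follows. Numeric importance ratings are an assumption made here to have something to compare; the description above only asks which arguments ‘seem equally important’. The function name `franklin_decision` and the example arguments are hypothetical.

```python
def franklin_decision(pros, cons):
    """Strike out pro/con pairs of equal importance, then decide.

    pros, cons: dicts mapping an argument label to a positive importance
    rating (the numeric rating is an assumption of this sketch).
    Returns the verdict plus the arguments left after striking out.
    """
    pros, cons = dict(pros), dict(cons)  # work on copies
    for pro_label, pro_rating in list(pros.items()):
        # Look for one remaining con judged equally important.
        match = next((c for c, r in cons.items() if r == pro_rating), None)
        if match is not None:
            del pros[pro_label]
            del cons[match]
    # Decide by the weightiest argument that is left on either side.
    best_pro = max(pros.values(), default=0)
    best_con = max(cons.values(), default=0)
    if best_pro > best_con:
        verdict = "accept"
    elif best_con > best_pro:
        verdict = "reject"
    else:
        verdict = "undecided"  # everything was struck out
    return verdict, pros, cons

# Invented example arguments and ratings.
pros = {"shorter commute": 3, "new park": 2, "more jobs": 5}
cons = {"higher taxes": 3, "noise": 2, "lost farmland": 4}
verdict, left_pros, left_cons = franklin_decision(pros, cons)
print(verdict, left_pros, left_cons)
```

In this example two pairs cancel, and the remaining pair is decided by the weightier ‘pro’. Two people with different ratings would generally strike out different pairs, which is exactly the group decision problem noted above.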

Interestingly, preference or importance comparison is often suggested as a preliminary step towards developing a more thoroughly considered set of weightings in the next level:

Weights of relative importance

As indicated above, the technique of assigning ‘weights of relative importance’ to the aspects on each ‘branch’ of evaluation aspect trees has been part of formal evaluation techniques such as the Musso-Rittel procedure [2] for buildings (discussed in previous posts) as well as of proposals for systematic evaluation of pro/con arguments [5]. These weights of relative importance, expressed on a scale of zero to 1 (or zero to 100) and subject to the condition that all weights on the respective level must add up to 1 (or 100, respectively), indicate the evaluator’s judgment about ‘how much’ of the overall judgment (by what percentage or fraction) each single aspect judgment should determine. In this view, the use of the ‘principle’ approach above can be seen as simply assigning the full weight of 1.0 or 100% to the one aspect expressed in the discussion that the evaluator considers a principle, overriding all other aspects under consideration.
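A minimal sketch of this aggregation for one level of an aspect tree, assuming a plain weighted sum as the aggregation function; the aspect names, weights and judgment scores are invented, and `overall_judgment` is a hypothetical helper name.

```python
import math

def overall_judgment(weights, scores):
    """Weighted sum of aspect judgments; weights on one level must add up to 1."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights on one level must add up to 1")
    return sum(weights[a] * scores[a] for a in weights)

# Invented judgment scores for three aspects of one plan.
scores = {"cost": -1.0, "safety": 2.0, "appearance": 1.0}

# Weights of relative importance: each is a fraction of the overall judgment.
weights = {"cost": 0.5, "safety": 0.3, "appearance": 0.2}
print(overall_judgment(weights, scores))

# A 'principle' as the limiting case: one aspect carries the full weight,
# so the overall judgment simply equals the judgment on that aspect.
principle = {"cost": 0.0, "safety": 1.0, "appearance": 0.0}
print(overall_judgment(principle, scores))
```

The check on the sum makes the ‘taking away’ visible: raising one weight is only accepted once another weight has been lowered by the same amount.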

To some, the resulting set of weights may seem somewhat arbitrary. The task of having to adjust the weights to meet the condition of adding up to 1 or 100 can be seen as a nudge to get evaluators to more carefully consider these judgments, not just arbitrarily assign meaningless weights: To make one aspect more important (by assigning it a higher weight), that added weight must be ‘taken away’ from other aspects.

Arbitrariness can also be reduced by using the Ackoff technique [3] of generating a set of weights that can be seen as ‘approximately’ representing a person’s true valuation. It consists of ranking the aspects and assigning numbers (on no particular scale), then comparing each pair of aspects, deciding which one is more important than the other, and adjusting the numbers accordingly, until a set of numbers is achieved that ‘approximately’ reflects an evaluator’s ‘true’ valuation. To make this set comparable to other participants’ weightings (so that the numbers carry the same ‘meaning’ to all participants), it must then be ‘normalized’ by dividing each number by the total, so that the set again adds up to 1 (or, multiplied by 100, to 100). Displaying these results for discussion will further reduce arbitrariness. This can actually induce participants to change their weightings to reflect recognition of, and empathetic accommodation for, others’ concerns that they had not recognized in their own first assignments. Of course, the discussion requires that the weighting is made explicit.
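The pairwise check and the normalization step might look like this in Python. It is a simplified sketch of the steps as described above, not a full rendering of the procedure in [3]; the raw numbers, the aspect names and the helper names `inconsistent_pairs` and `normalize` are assumptions for illustration.

```python
def inconsistent_pairs(raw, more_important):
    """Return the pairwise judgments that the current numbers contradict.

    more_important: list of (a, b) pairs meaning 'a matters more than b'.
    """
    return [(a, b) for a, b in more_important if raw[a] <= raw[b]]

def normalize(raw):
    """Divide each number by the total so that the weights add up to 1."""
    total = sum(raw.values())
    if total <= 0:
        raise ValueError("raw importance numbers must have a positive total")
    return {aspect: value / total for aspect, value in raw.items()}

# Invented first-pass numbers on no particular scale.
raw = {"safety": 10, "cost": 8, "appearance": 2}
judgments = [("safety", "cost"), ("cost", "appearance"), ("safety", "appearance")]

# Adjust the raw numbers by hand until no pairwise judgment is contradicted.
print(inconsistent_pairs(raw, judgments))  # [] means nothing left to adjust

weights = normalize(raw)
print(weights)  # {'safety': 0.5, 'cost': 0.4, 'appearance': 0.1}
```

Only after normalization can one participant’s 0.5 for ‘safety’ be compared with another participant’s weight for the same aspect.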

Taking the ‘test’ of deliberation seriously — of enabling a person A to make judgments on behalf of another person B — this can now be seen to require that A could show how A can use not only the set of aspects and the criterion functions but also B’s weight assignments for all aspects and sub-aspects etc., and of course the same aggregation function, resulting in the overall judgment that B would have made. It likely would be different from A’s own judgment using her own set of aspects, criteria, criterion functions and weighting. The technique using weights of relative importance thus looks like the most promising one for meeting this test. By extension, to the extent societal or government regulations are claimed to be representative of the community’s values, what would be required to demonstrate even approximate closeness of the underlying valuation?

Approaches avoiding formal evaluation

The discussion of weighting would be incomplete without mentioning some examples of approaches that entirely sidestep the evaluation of plans of the ‘formal evaluation’ kind and of other kinds using weighting. One is the well-known Benefit-Cost Analysis; the other relies on generating a plan by following a procedure or theory that has been accepted as valid and as guaranteeing the validity or quality of the resulting design, plan or policy.

Expressing weights in money: Benefit-Cost Analysis

The Benefit-Cost Analysis is based on the fact that the implementation of most plans will cost money — cost of course being the main ‘con’ criterion for some decision-making entities. So the entire question of value and value differences is turned into the ‘objective’ currency of money: are the benefits (the ‘pros’) we expect from the project worth the cost (and other ‘cons’)? This common technique is mandatory for many government projects and policies. It has been so well described as well as criticized in the literature, that it does not need a lengthy treatment here; though some critical questions it shares with other approaches will be discussed below.

Generating plans by following a ‘valid’ theory or custom

Approaches that can be described as ‘generative’ design or planning processes rely on the assumption that following the steps of a valid theory, or using rules and elements that constitute the whole ‘solution’ (elements that have been determined to be ‘valid’) to construct the plan, will thereby guarantee its overall validity or quality. Thus, there is no need to engage in a complicated evaluation at the end of that process. Christopher Alexander’s ‘Pattern Language’ [4] for architecture and urban design is a main recent example of such approaches, though it can be argued that it is part of a long tradition of similar efforts of rules or pattern books for proper building, going back to antiquity, either as cultural traditions known to the community or as ‘secrets’ of the profession. He claims that following this ‘timeless way’ of building “frees you from all method”.

However, the argument that the individual patterns and the rules for connecting these elements into the overall design somehow ‘guarantee’ the validity and quality of the overall design (if followed properly) merely shifts the issue of evaluation back to the task of identifying valid patterns and relationship rules. This is discussed (if at all) in very different language, and often simply posited by the authority of tradition (‘proven by experience’) or, as in the Pattern Language, by that of its developer Alexander or of followers writing pattern languages for different domains such as computer programming, ‘social transformation’, or composing music. To the best of my knowledge, the evaluation tools used in that process remain to be studied and made explicit. The discussion of this issue is somewhat more difficult than necessary because of Alexander’s claim that the quality of patterns (their beauty, value, ‘aliveness’) is ‘a matter of objective fact’.

Do weighting methods make a difference?

A question that is likely to arise in a project whose participants are confronted with the task of evaluating proposed plans, and who therefore have to choose the evaluation method they will use, is whether this choice will make a significant difference in the final judgment. The answer is that it definitely will, but the extent of the difference will depend on the context and circumstances of each project, especially if there are significant differences of opinion in the affected community. The trouble is that the extent of such differences can only be seen by actually using some of the more detailed techniques for a given project and comparing the decision outcomes: an effort unlikely to be undertaken in a situation where the question is whether any one technique is ‘worth the effort’ at all.

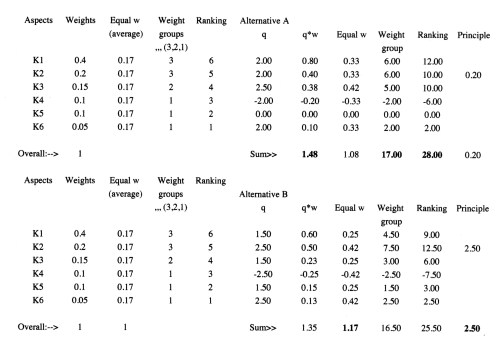

The table below shows a very simple example of such a comparison. For the stated assumptions of a few evaluation aspects and weighting assignments, the different ways of dealing with the weighting issue actually will yield different final plan decisions. This crude example cannot, of course, provide any general guidelines for choosing the tools to use in any specific project. The above list and discussion of policy decision options can at best become part of a ‘toolkit’ from which the participants in each project can choose to construct the approach they consider most suitable for their situation.

Table 1 Comparison of the effect of different weighting approaches
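Independently of the figures in Table 1, the same kind of comparison can be tried out in a few lines of Python. Everything in this sketch is invented for illustration: the two plans, the three aspects, the scores (on an assumed scale from -3 to +3), the weight sets and the helper names `weighted_choice` and `principle_choice`. A weighted sum is assumed as the aggregation function.

```python
# Invented judgment scores; these are NOT the figures of Table 1.
SCORES = {
    "Plan A": {"cost": 3, "safety": -1, "appearance": 2},
    "Plan B": {"cost": -1, "safety": 2, "appearance": 1},
}

def weighted_choice(weights, plans=SCORES):
    """Pick the plan with the highest weighted sum of scores (None if no plan)."""
    totals = {
        plan: sum(weights[a] * score for a, score in scores.items())
        for plan, scores in plans.items()
    }
    return max(totals, key=totals.get) if totals else None

def principle_choice(aspect, minimum, weights):
    """Eliminate plans failing the principle, then choose among the rest."""
    admissible = {p: s for p, s in SCORES.items() if s[aspect] >= minimum}
    return weighted_choice(weights, admissible)

equal = {"cost": 1, "safety": 1, "appearance": 1}            # no weighting
grouped = {"cost": 3, "safety": 4, "appearance": 2}          # group weights
relative = {"cost": 0.2, "safety": 0.6, "appearance": 0.2}   # adds up to 1

results = {
    "equal weights": weighted_choice(equal),
    "weight groups": weighted_choice(grouped),
    "relative weights": weighted_choice(relative),
    "safety as principle": principle_choice("safety", 0, equal),
}
for approach, plan in results.items():
    print(f"{approach}: {plan}")
```

With these made-up numbers, equal weights and weight groups select Plan A, while relative weights and the ‘safety’ principle select Plan B. Other assumptions would of course shift the outcome, which is the point of trying the comparison for a given project.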

The ‘weights of relative importance’ form of dealing with different degrees of importance in the evaluation considerations is used both in the formal evaluation procedures of the Musso-Rittel type and, in adaptation, in the argument evaluation approach for planning arguments [5]. It may be considered the most useful for approximately representing different bases of judgment. However, even for that purpose, there are some questions (for all these forms) that need more exploration and discussion.

Questions and Issues for further discussion

Apart from the question whether the apparent conflict between evaluation techniques using weighting approaches, and those avoiding evaluation and thus weighting at all, can be settled, there are some issues about weighting itself that require more discussion. They include contingency questions: about the stability of weight assignments over time and different, changing context conditions, their applicability at different phases of the planning process, and the possibilities (opportunities) for manipulation through bias adjustments between weights of aspects and the steepness (severity) of criterion functions for those aspects.

The relationship between weighting and the steepness of criterion functions

A perhaps minor detail is the relationship between the weight assigned to an evaluation aspect and the criterion function for that aspect in a person’s ‘evaluation model’. A steep criterion function curve can have the same effect as a higher weight for the aspect in question. To some extent, making both the weighting and the criterion functions of all participants explicit and visible for discussion in a particular project may help to counteract undue use of this effect, e.g. by asking where the criterion function should cross the ‘zero’ judgment (‘so-so, neither good nor bad, but anything above that line still acceptable’), and thus prevent extreme severity of judgments. This would assume considerable sophistication on the part of individuals attempting such distortion, and of other participants in the discourse to detect and deal with it. But both in personal assessments and in efforts to define common social evaluations (regulations) expressed in terms of criterion functions, such as those implied by the suggestions in [8], there remains a potential for manipulation that at the very least should encourage great caution in accepting evaluation results as direct decision criteria.
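The interchangeability of weight and steepness is easy to see for a linear criterion function. The sketch below assumes such a linear, unclipped function; the measured value, the zero crossing, the weights and the helper name `make_criterion` are hypothetical.

```python
import math

def make_criterion(slope, zero_at):
    """Return a linear criterion function: measured value -> judgment score.

    Assumption: the function is linear and not clipped to the ends of a
    judgment scale, which keeps the equivalence below exact.
    """
    def criterion(measured):
        return slope * (measured - zero_at)
    return criterion

# Hypothetical aspect whose 'so-so' (zero) judgment lies at a measured 2.0.
gentle = make_criterion(slope=1.0, zero_at=2.0)
steep = make_criterion(slope=2.0, zero_at=2.0)

measured = 3.5
with_higher_weight = 0.4 * gentle(measured)  # weight 0.4, gentle curve
with_steeper_curve = 0.2 * steep(measured)   # weight 0.2, curve twice as steep

# Both contribute the same amount to the overall judgment.
print(math.isclose(with_higher_weight, with_steeper_curve))  # True
```

Halving the declared weight while doubling the slope leaves the aspect’s contribution unchanged, so an evaluator’s stated weights alone do not reveal how strongly an aspect actually drives the result. Once scores are clipped to the ends of a judgment scale, the equivalence holds only within the unclipped range.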

Tentative conclusions and outlook

These issues suggest that it is far from clear whether they can eventually be settled in favor of one or the other view. What does this mean for the concern triggering this investigation: to explore what provisions should be made for the evaluation task in the design of a public planning platform? Any attempt to pre-empt the decision by mandating one specific approach or technique should be avoided, to prevent it from itself becoming an added controversy distracting from the task of developing a good plan. So given the current state of the discussion, for the time being, should the platform just offer participants information (a ‘toolkit’) about the possible techniques at their disposal? Can ‘manuals’ with guidance for their application, and perhaps suggestions for circumstances in the context or the nature of the problem, offer discourse participants in projects with wide, even global participation adequate guidance for their use? Or will it take more general education to prepare the public for adequately informed and meaningful participation?

The emerging complexity of the issues discovered about even this minor component of the evaluation question could encourage opponents of these cumbersome procedures. Are calls for stronger leadership (from groups asking for leadership with systems thinking, better ‘awareness’ of ‘holistic’, ecological or social inequality issues, or other moral qualities) actually indicators of public unwillingness to engage in thorough evaluation of the public planning decisions we are facing? Or just of an inability to do so? An inability caused perhaps by inadequate education for such issues, compounded by inadequate information and a lack of accessible and workable platforms for carrying out the needed discussions and judgments? Or is there also some desire for power at play, for such groups to themselves become those leaders, empowered to make decisions for the ‘common good’?

Notes, References

[1] Kenneth J. Arrow, 1951, 2nd ed., 1963. Social Choice and Individual Values, Yale University Press.

[2] Musso, A. and Horst Rittel: “Über das Messen der Güte von Gebäuden”. In: “Arbeitsberichte zur Planungsmethodik”, Krämer, Stuttgart 1971.

[3] Ackoff, Russell: “Scientific Method”, John Wiley & Sons 1962.

[4] Alexander, Christopher: “A Pattern Language”, Oxford University Press, 1977.

[5] Mann, T.: “The Fog Island Argument”, XLibris, 2009, or “The Structure and Evaluation of Planning Arguments”, INFORMAL LOGIC, Dec. 2010.

[6] Mann, T.: “Programming for Innovation: The Case of the Planning for Santa Maria del Fiore in Florence”. Paper presented at the EDRA (Environmental Design Research Association) Meeting, Black Mountain, 1989. Published in DESIGN METHODS AND THEORIES, Vol 24, No. 3, 1990. Also: Chapter 16 in “Rigatopia — the Tavern Discussions“, Lambert Academic Publication 2015.

[7] Mann, T.: “Time Management for Architects and Designers”, W. W. Norton, 2003.

[8] “Die Methodische Bewertung: Ein Instrument des Architekten. Festschrift für Professor Arne Musso zum 65. Geburtstag”, Technische Universität Berlin, 1993. Also: Höfler, Horst: Problem-Darstellung und Problem-Lösung in der Bauplanung. IGMA-Dissertationen 3, Universität Stuttgart 1972.

–o–

Correction: The technique for developing approximate weights of relative importance cited as ‘Ackoff approximate measure’ in the source ‘Scientific Method’ by Ackoff should be “Churchman-Ackoff Approximate Measure of Value” Page It is an expanded version of an ‘Ackoff Approximate Measure of Value’ in earlier publications, one of several approaches to the same basic task discussed there. The description is somewhat simplified in that it only considers comparisons of single pairs of objectives / aspects, not comparisons of single aspects with groups of other aspects.